IBM Cloud Virtual Private Cloud

The VPC project story is long, complex, and was a good learning opportunity for me. Out of necessity, I am going to avoid telling it chronologically and instead highlight events, opportunities, and challenges from the journey.

Timeline: August 2017 - June 2019

Overview

The Virtual Private Cloud (VPC) project is the next evolution of IBM Cloud's infrastructure offerings. Before VPC was released, IBM Cloud Infrastructure offered the same services that its acquisition, Softlayer, had been providing for years. Managing the Softlayer suite of offerings was very similar to managing a physical datacenter. Relative to the competition, it just wasn't as flexible, simple, or private.

VPC offerings were upgrades to the hardware and software of the Softlayer infrastructure. These upgrades made it easier for our clients to grow and evolve their applications while maintaining a more isolated environment.

The short, but still too long, backstory

The VPC project, codenamed Genesis, was one of the longest projects I have been on. While this project was one of innovation, exploration, and evolution of our offerings, it was also filled with the complexities of communication, priorities, hopes, and fears.

Genesis was born from the need to evolve the flexibility and simplicity of our powerful physical technology to keep up with the expectations of the cloud market. This evolution was led by our Innovation Lab, equipped with an advanced new piece of network hardware to leapfrog our competition. In the early stages, the GUI design was driven solely by Megan Baxter, who would later become my design lead.

While Megan had been working on Genesis for around 6 months, I was working on our Networking Design Team. My primary focus was our new CDN and Cloud Internet Services (See project) offerings. At this time, our network team began getting notice of a new project codenamed “Wrigley” (after Wrigley Field; see, IBM can be fun!).

Wrigley was a new networking tech that was created for evolving the flexibility and simplicity of our powerful, physical technology...wait this sounds familiar.

As we were being onboarded to this project, our design team immediately noticed that Wrigley was targeting the exact use cases Genesis was, albeit with a shorter time frame and with the goal of catching up to the competition vs. leapfrogging it. Alongside our design work, we began prioritizing communication between our teams to reduce duplicated work and determine our go-to-market strategies.

Work on both projects continued in parallel while further discussions took place. As designers working in close proximity, Megan and I made sure to operate with the same design paradigms in preparation for the eventual merging of the products. At least, that is what we hoped for, since a merged product was the only user experience we would consider minimally viable.

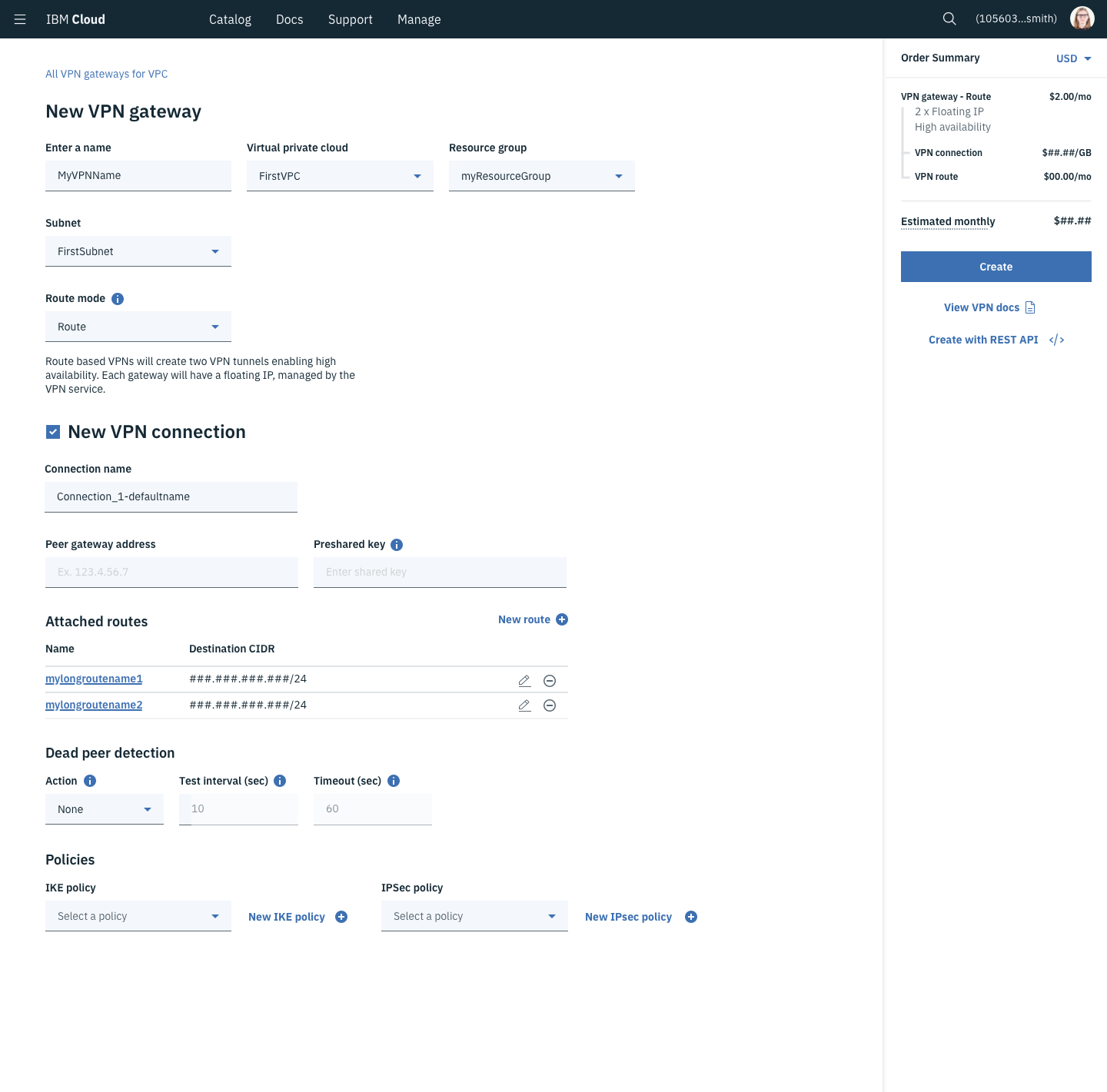

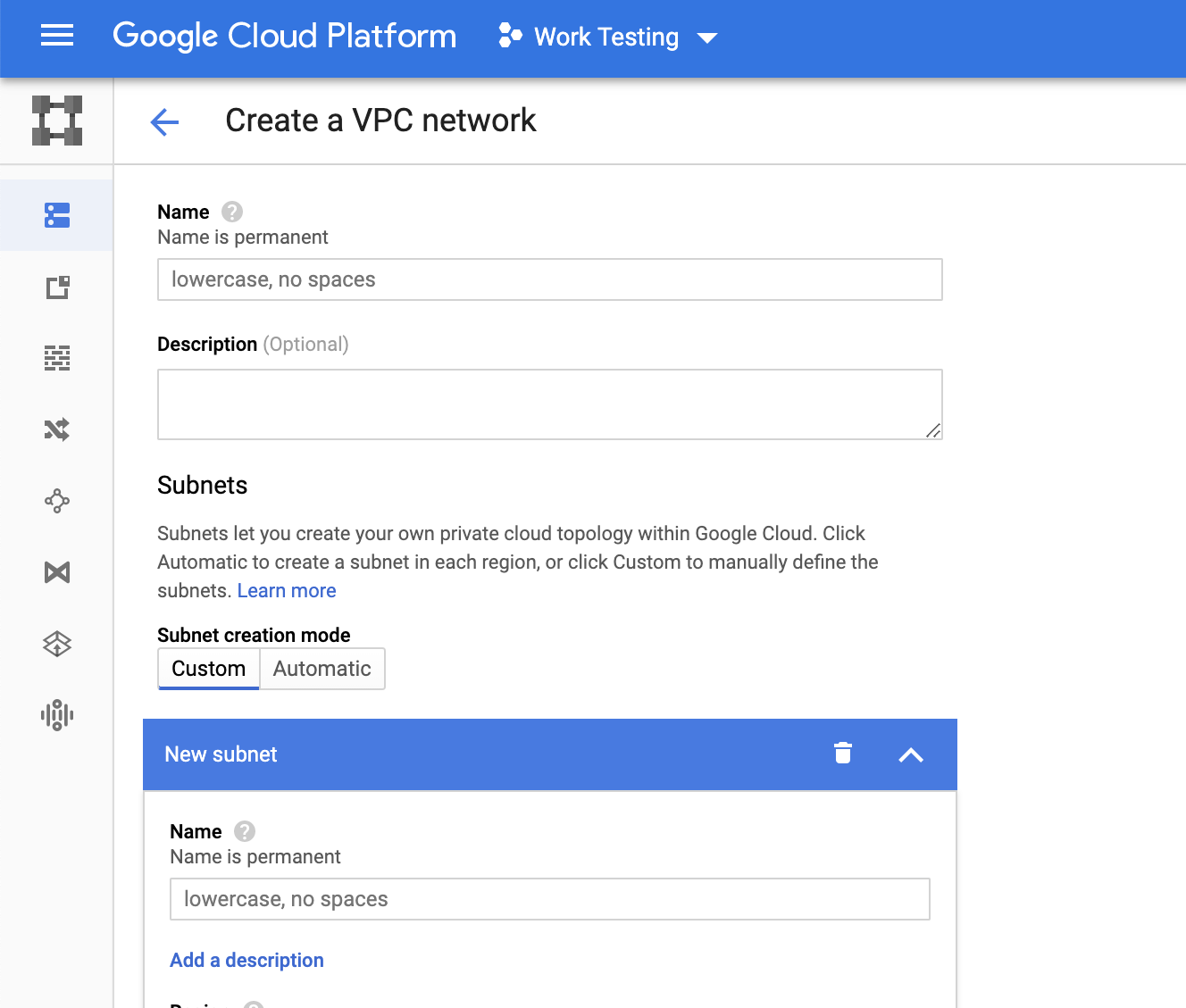

My service coverage

Virtual Private Cloud - The isolated, private network environment that contains a client's other cloud resources, such as subnets and Virtual Servers.

Subnets - A subset of your VPC. Each subnet designates a range of IP addresses that other services use to communicate. A subnet operates as a communication zone for everything inside it, and a client can place security requirements on traffic entering and leaving the subnet.
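As a rough illustration of what “a range of IP addresses” means in practice, the sketch below uses Python's standard `ipaddress` module to check whether an address falls inside a subnet. The subnet, the addresses, and the helper names `in_subnet` and `same_zone` are hypothetical, and the sketch models only the address-range idea, not how IBM Cloud actually enforces subnet boundaries.

```python
import ipaddress

# Hypothetical subnet, chosen only for illustration.
subnet = ipaddress.ip_network("10.240.0.0/24")

def in_subnet(address: str, network: ipaddress.IPv4Network) -> bool:
    """Return True if the address falls inside the subnet's IP range."""
    return ipaddress.ip_address(address) in network

def same_zone(a: str, b: str, network: ipaddress.IPv4Network) -> bool:
    """Two addresses share the subnet's communication zone if both are inside it."""
    return in_subnet(a, network) and in_subnet(b, network)

print(in_subnet("10.240.0.17", subnet))                 # True
print(in_subnet("10.240.1.17", subnet))                 # False
print(same_zone("10.240.0.4", "10.240.0.200", subnet))  # True
```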

Public Gateway - A service attached to a subnet that allows resources on that subnet to initiate outbound communication to the public internet through the gateway.

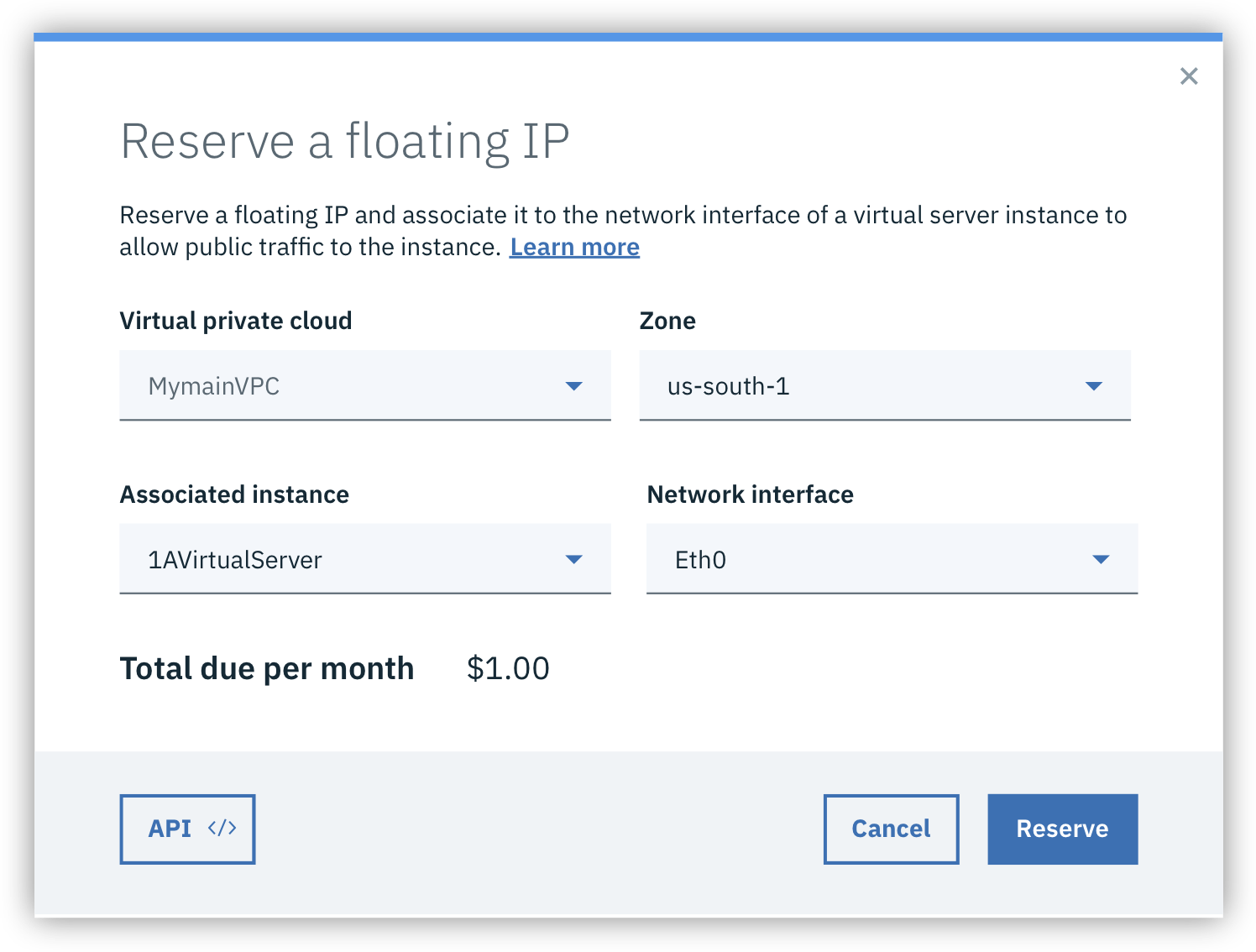

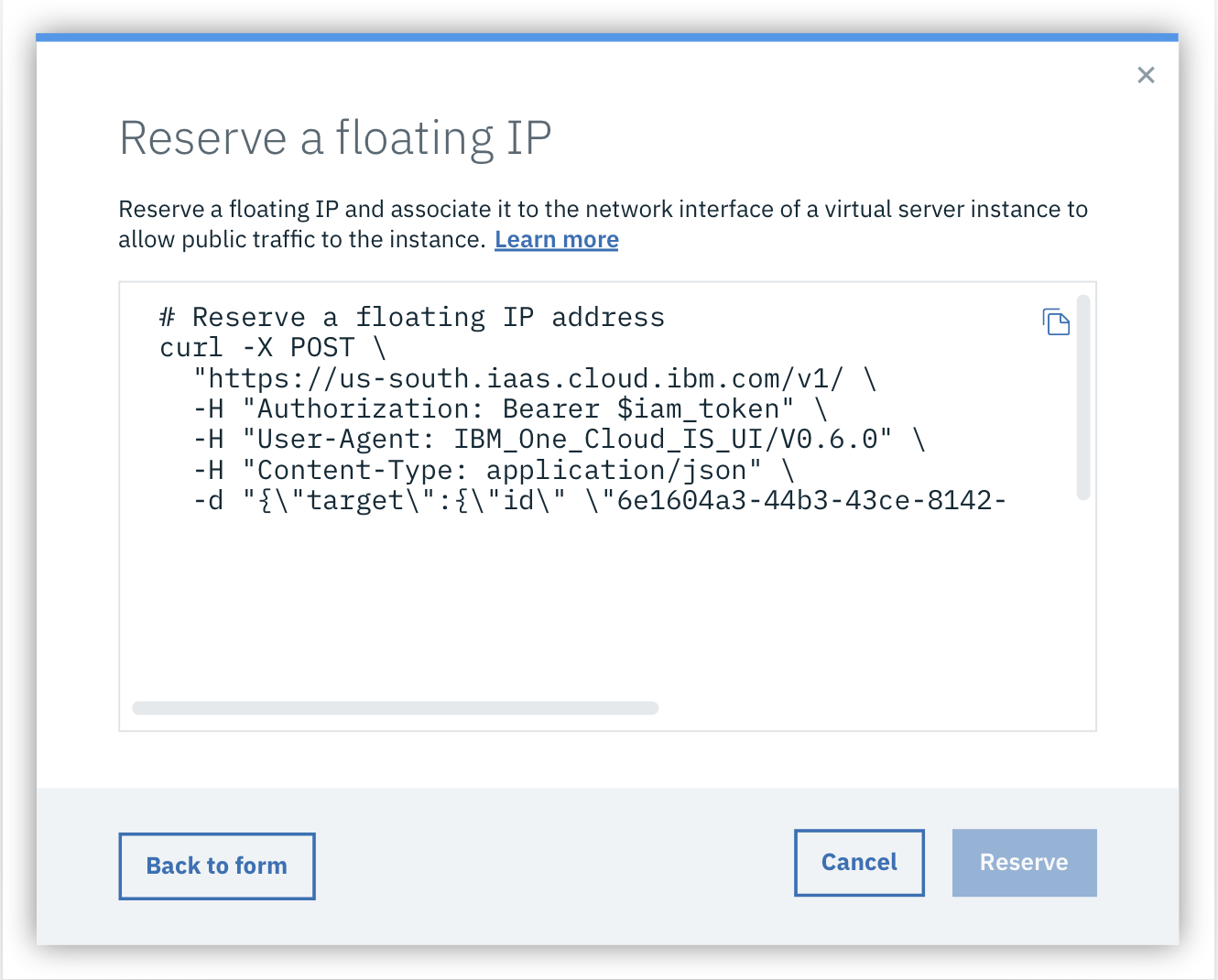

Floating IP - A publicly addressable IP address attached to a Virtual Server's Network Interface Card. Use this to make an application, like a website, reachable from the public internet.

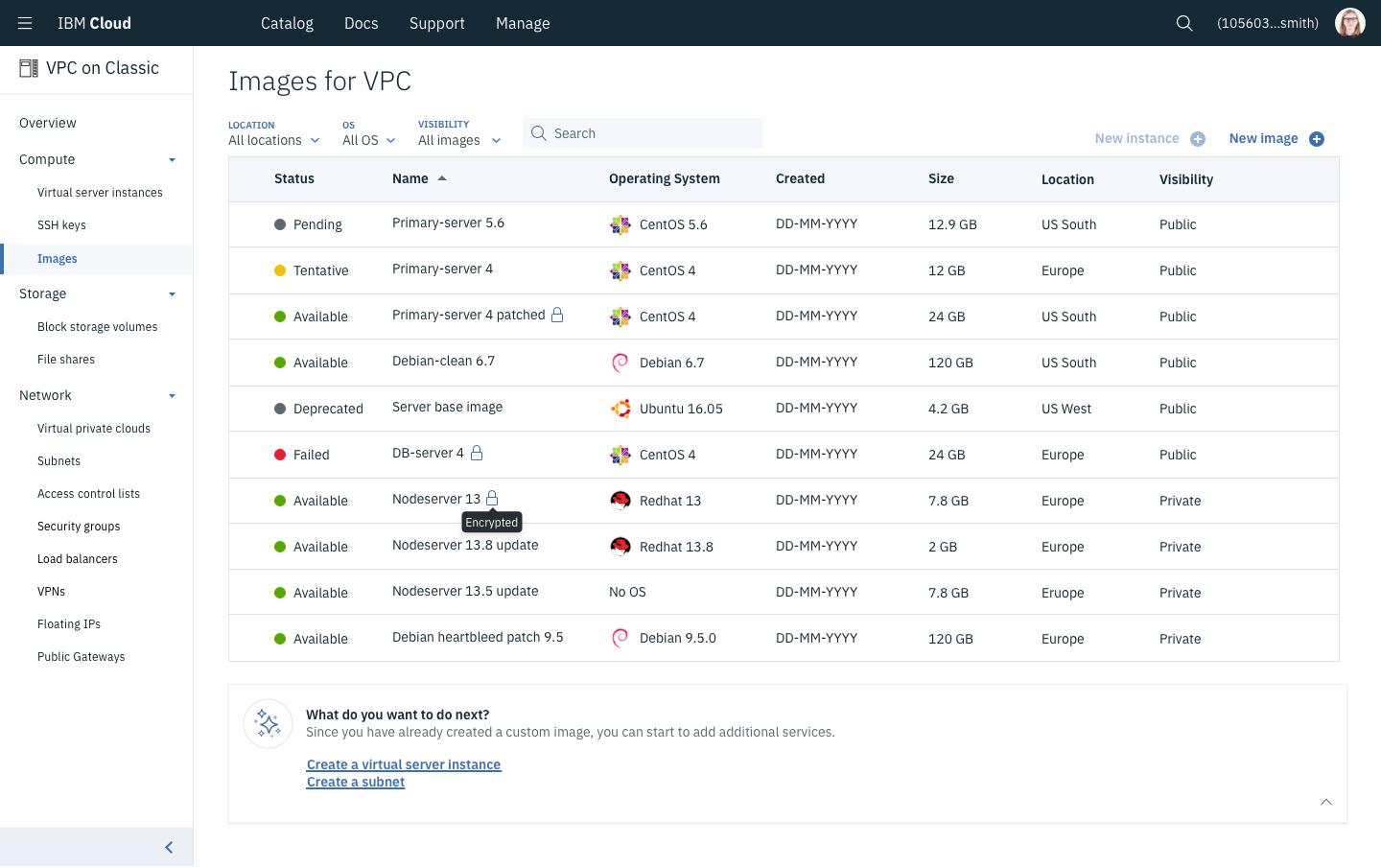

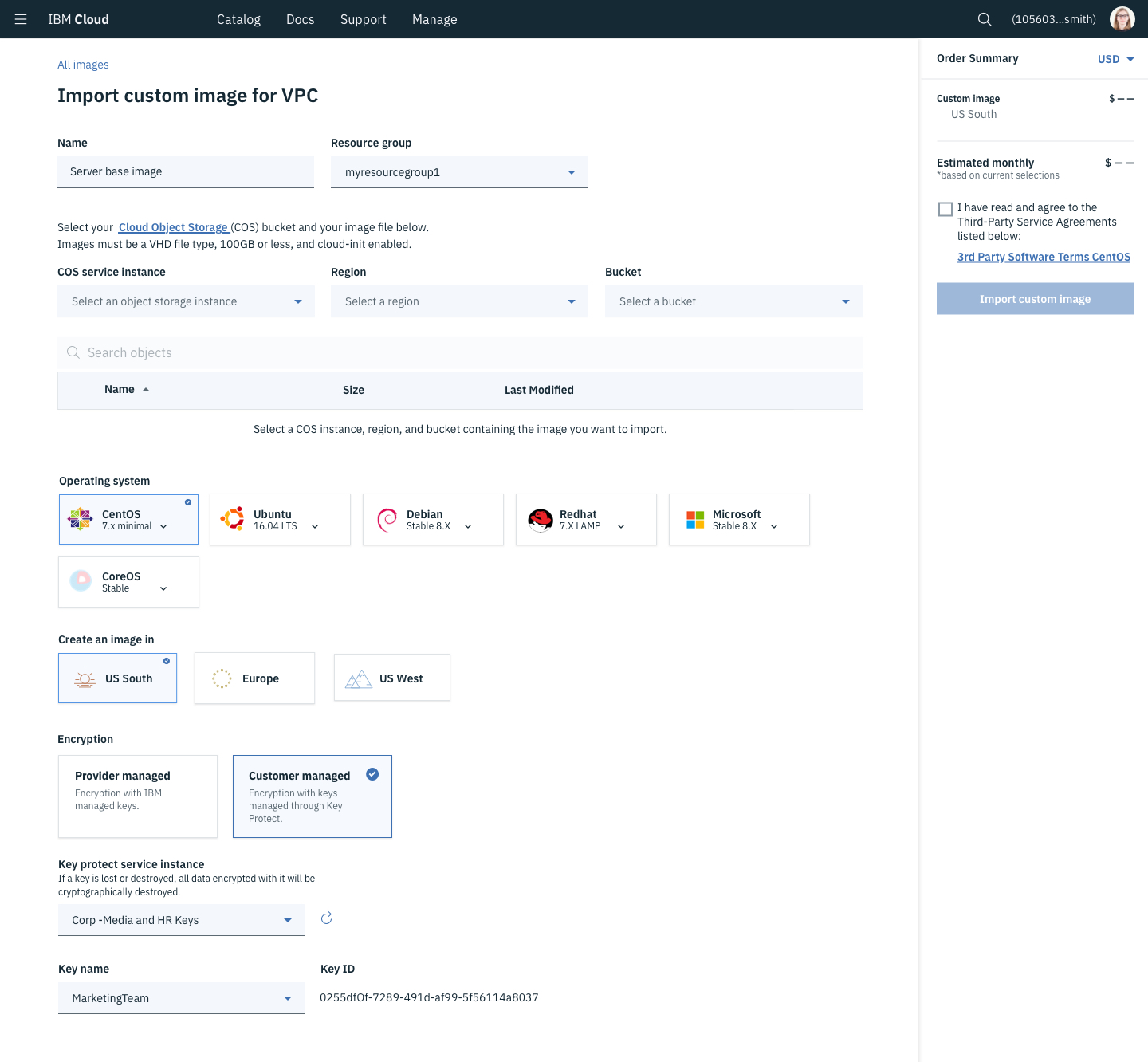

Custom Images - A custom image is a copy of everything on a computer, including the OS and anything saved to that computer. To operate at scale, clients create and destroy massive numbers of Virtual Servers. Setting up a blank server can take hours, so clients create and upload an “image” that contains everything important for running their application.

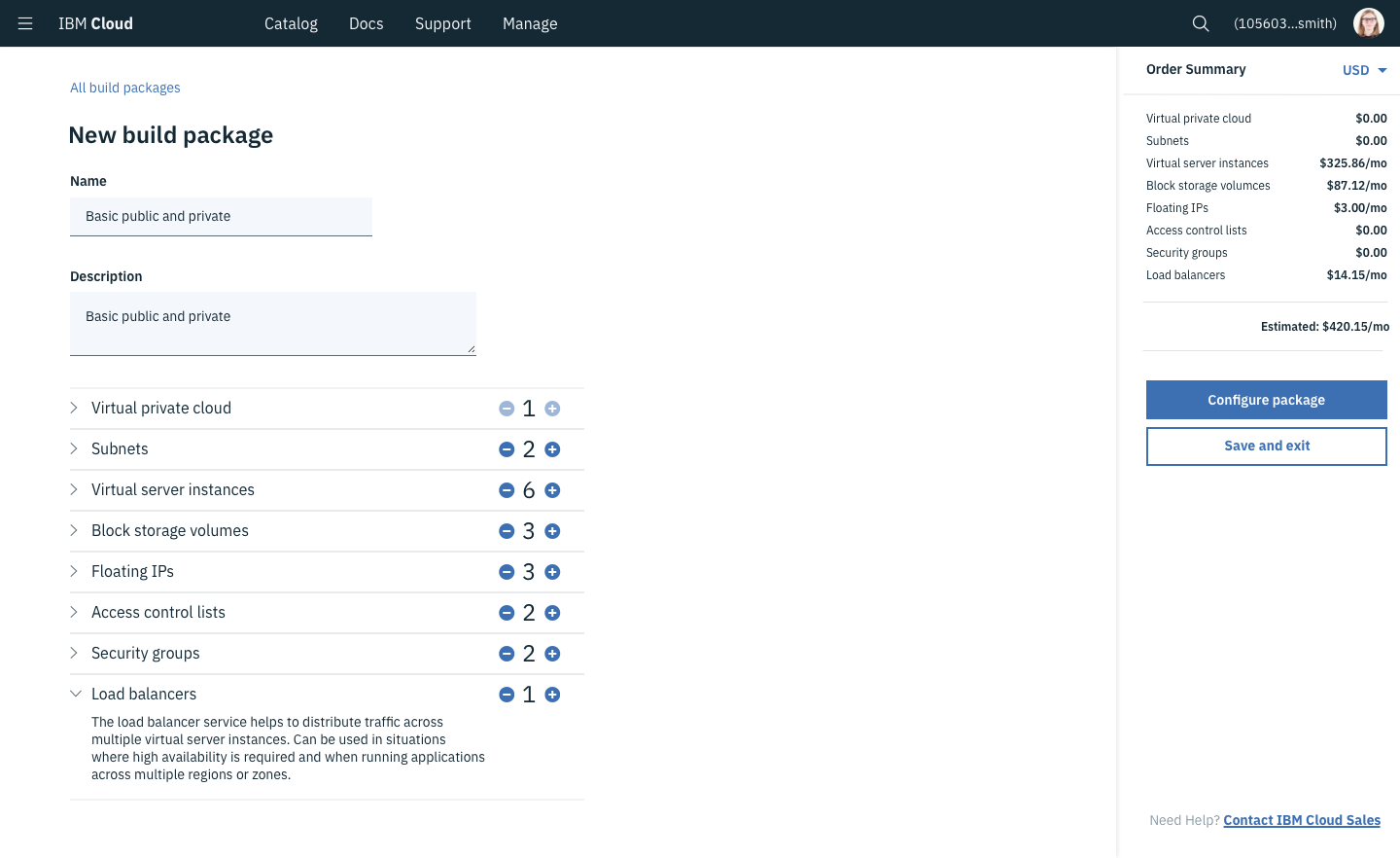

Load Balancers - A service that distributes network traffic across multiple compute instances so that no individual instance receives too much of it. Used for reducing outages, improving response times, and supporting various deployment strategies.
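Round robin is one of the simplest traffic distribution strategies: each new request goes to the next instance in a fixed rotation. The sketch below is a generic illustration, not IBM Cloud's load balancer implementation; the class name and instance names are hypothetical, and real load balancers typically also offer other methods and health checks.

```python
from itertools import cycle

class RoundRobinBalancer:
    """Hand each incoming request to the next instance in a fixed rotation."""

    def __init__(self, instances: list[str]) -> None:
        if not instances:
            raise ValueError("at least one instance is required")
        self._rotation = cycle(instances)

    def next_instance(self) -> str:
        return next(self._rotation)

# Hypothetical pool of three compute instances.
balancer = RoundRobinBalancer(["server-a", "server-b", "server-c"])
assignments = [balancer.next_instance() for _ in range(5)]
print(assignments)  # ['server-a', 'server-b', 'server-c', 'server-a', 'server-b']
```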

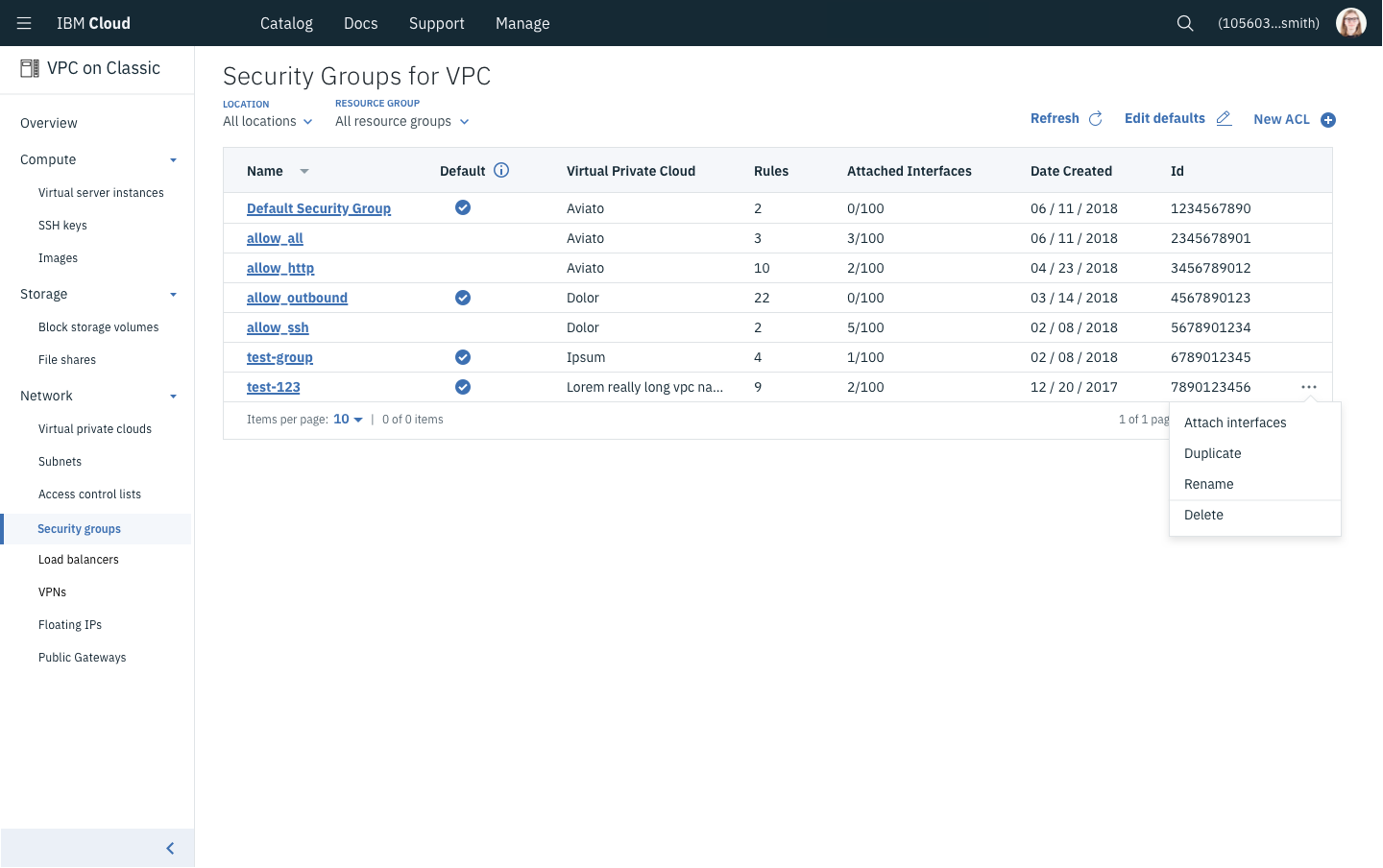

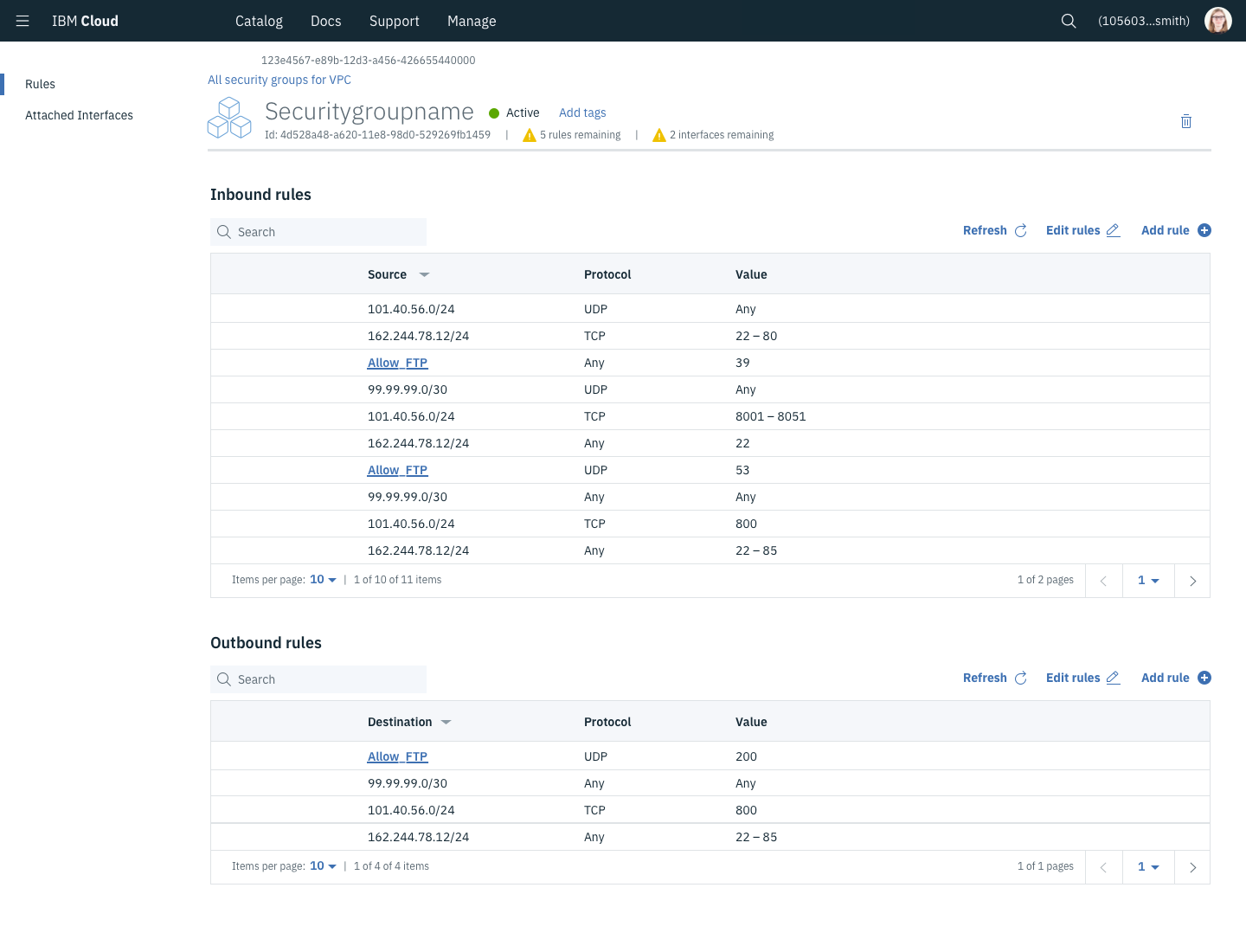

Security Groups/Access Control Lists - These two constructs are fairly similar but are applied at different scales. ACLs control the allowed inbound and outbound traffic for an entire subnet, while Security Groups do roughly the same for a compute instance's network interface. Both are used to segment and control traffic between networked devices.
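To make the ACL idea more concrete, here is a minimal sketch of ordered rule evaluation. It is a generic model assumed for illustration: the `Rule` fields, the first-match ordering, and the default-deny fallback are not taken from IBM Cloud's documentation, and Security Groups are not modeled. The addresses come from ranges reserved for documentation.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional

@dataclass
class Rule:
    action: str          # "allow" or "deny"
    direction: str       # "inbound" or "outbound"
    remote_cidr: str     # range of the other end of the connection
    port: Optional[int]  # None matches any port

def evaluate(rules: list[Rule], direction: str, remote_ip: str, port: int) -> str:
    """Return the action of the first matching rule, or "deny" if none match."""
    addr = ipaddress.ip_address(remote_ip)
    for rule in rules:
        if rule.direction != direction:
            continue
        if addr not in ipaddress.ip_network(rule.remote_cidr):
            continue
        if rule.port is not None and rule.port != port:
            continue
        return rule.action
    return "deny"  # assumed default when no rule matches

# Hypothetical ACL using documentation-only address ranges.
acl = [
    Rule("deny", "inbound", "203.0.113.0/24", None),  # block one range entirely
    Rule("allow", "inbound", "0.0.0.0/0", 443),       # allow HTTPS from anywhere else
]
print(evaluate(acl, "inbound", "198.51.100.7", 443))  # allow
print(evaluate(acl, "inbound", "203.0.113.9", 443))   # deny: first rule matches
print(evaluate(acl, "inbound", "198.51.100.7", 22))   # deny: no rule matches
```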

Market Understanding

My go-to on any project is to review the rest of the market. Comparing 3-4 competitors in the same space and identifying what they did well, heuristically, provides a broad understanding of user expectations. Additionally, seeing how their technology worked functionally helped our team propose ideas to our architects so that we could get the right interactions and information in the right places before things were set in stone.

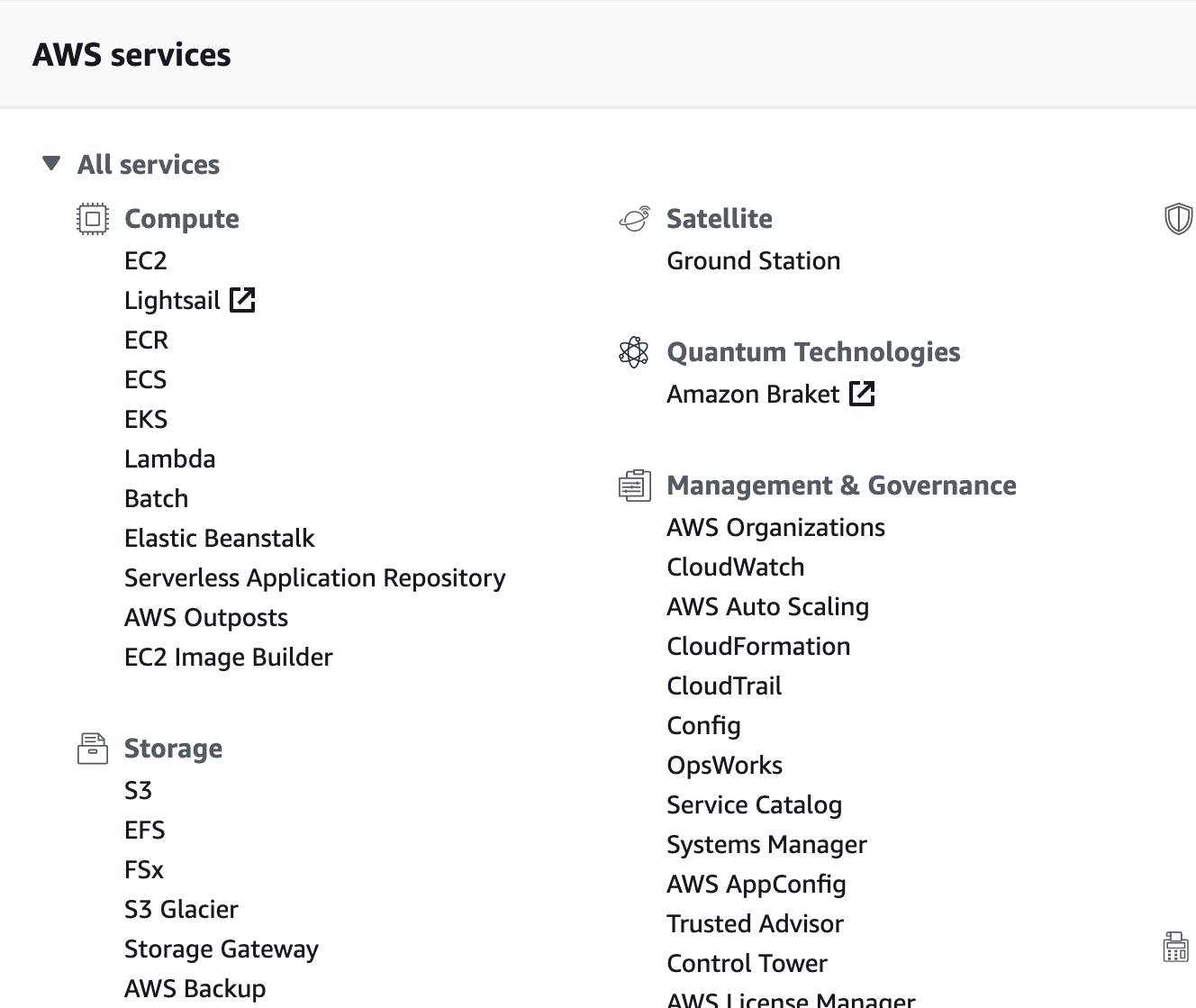

In this space, the primary competitors were Azure, AWS, and Google Cloud. For example, AWS provided a relatively flat layout that helped us associate different services with one another. Google Cloud gave us insight into a more extreme simplification of services, as well as into its Global VPC and the benefits it provided. Our architecture teams primarily looked to AWS for guidance, even though Azure and Google Cloud had innovated more recently. By comparing all three, we were able to bring technological insights back to our teams.

User Feedback Methods

We had 3 consistent sources of user feedback throughout the project. The first was our internal beta testers, who were perfect for brainstorming ideas. The second was usertesting.com, for external validation and catching simple oversights. Finally, our most critical, but slightly harder-to-access, source was our whitelisted, early access external clients.

One fun thing about having legitimate reasons to talk to internal users is that they do not hold back. When we had questions, assumptions, or designs, we took them to an internal Slack channel dedicated to this user group. Because it was internal, we often brought less refined, more exploratory design ideas there for quick iterations and gut checks.

Our internal teams tended to enjoy the GUI, but they focused on having a powerful CLI and API. One of our most impactful discoveries from this group was the importance of offering the CLI/API command for an action directly in the GUI. Seeing how each clickable input changed the CLI/API command taught them how to use it, and let them immediately take their work from the GUI and scale it productively.
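The idea of surfacing a command for a GUI action can be sketched as a function that maps form fields to a copy-pasteable command string. The command name `example-cli subnet create`, its flags, and the helper `build_cli_command` are hypothetical placeholders, not the actual IBM Cloud CLI syntax; the point is only that each input the user fills in maps to one visible part of the command.

```python
import shlex

def build_cli_command(base: list[str], options: dict[str, str]) -> str:
    """Turn GUI form values into a copy-pasteable shell command.

    Each non-empty form field becomes a --flag value pair, quoted so that
    values containing spaces survive being pasted into a terminal.
    """
    parts = list(base)
    for flag, value in options.items():
        if value:  # skip fields the user left blank
            parts += [f"--{flag}", value]
    return shlex.join(parts)

# Hypothetical form state; "example-cli" and its flags are placeholders.
form = {"name": "web tier", "vpc": "my-vpc", "cidr": "10.240.0.0/24", "zone": ""}
print(build_cli_command(["example-cli", "subnet", "create"], form))
# example-cli subnet create --name 'web tier' --vpc my-vpc --cidr 10.240.0.0/24
```

Regenerating the string on every input change is what lets the GUI double as a teaching tool: users see exactly which flag each click controls.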

Usertesting.com was primarily used with our InVision prototypes. As we narrowed in on ideas and built them into thorough prototypes, we ran them through usertesting.com. These tests often highlighted issues with things like terminology and strengthened our arguments when user flows were too complex. Unfortunately, usertesting.com had significant limitations due to the nature of hotspot-based prototypes and the need to use simple prompts to guide users with varying degrees of expertise.

As for our “whitelisted external clients”, these were users already using IBM technologies and in direct contact with our sales teams. They were provided with a “white glove” experience in which they could get assistance from IBM experts day or night. These users could start or stop a line of thinking, and even reprioritize the roadmap, if they were adamant enough. Feedback from whitelisted clients frequently came through the sales teams, who showed in-progress development to the clients. That information was passed to our project managers, who gave the feedback to the architects and design teams. Unfortunately, despite our efforts, there wasn't a strong design-to-client feedback loop here.

Think 2018

The VPC project was a huge talking point for our clients. As we drew closer to our expected launch date, our design teams saw an opportunity for massive, direct client feedback at the IBM Think conference, an enormous event where IBM brings together its leadership to speak about our work to existing and potential clients. Our design team, with the help of our researchers, was able to reserve an “Inner Circle” event room for 2 days of the conference. Inner Circle was a section open only to IBM clients and approved personnel.

In preparation for this event, I was in charge of creating a prototype that was thoroughly connected across our 10+ products. It had to let us move to any product our clients were interested in and experience what using that product was going to be like. In total, this was a 200+ screen prototype in InVision.

With the work on Genesis, Wrigley, and our existing Softlayer network, IBM had 3 separate network infrastructures that did not easily communicate. At Think, one of our major questions was “How do users want to manage these separate worlds?” Did they want a single place to do everything? Or, if the worlds didn't communicate (which they didn't), did users want them kept in separate experiences?

Our research plan was to organize a signup schedule with clients who would come in for 15-minute 1:1 user tests. However, we arrived on the first day a little late. As we opened the door, we discovered a room full of project leaders, seated and waiting. Thinking fast, our research lead Diana Harrelson began managing the room while our team set up the projector and the prototype we had intended to test 1:1.

Diana asked a member of the audience to operate the prototype at the front of the room while they and other audience members provided feedback. We had around 8 active participants in that session and were able to move through our VPC, Subnet, and Virtual Server flows.

The next day, we were able to organize the 1:1 sessions with a timesheet. Three of us, myself included, sat at individual tables, each with a laptop for a user to test on. We worked in 15-30 minute segments, letting each user interact with the prototype while we provided guided prompts.

Overall, we reached ~20 participants, gathered feedback on information layouts and priorities, and got a pretty strong answer to our big question. Our users felt that if the networks didn't communicate, the benefits of showing resources together in a single location did not outweigh the confusion and security risks that could cause. This allowed us to significantly simplify the VPC experience without being restricted by our legacy technology's abilities. It also improved the onboarding experience and made it easier for marketing to say “Use the new stuff without worrying about the old”.

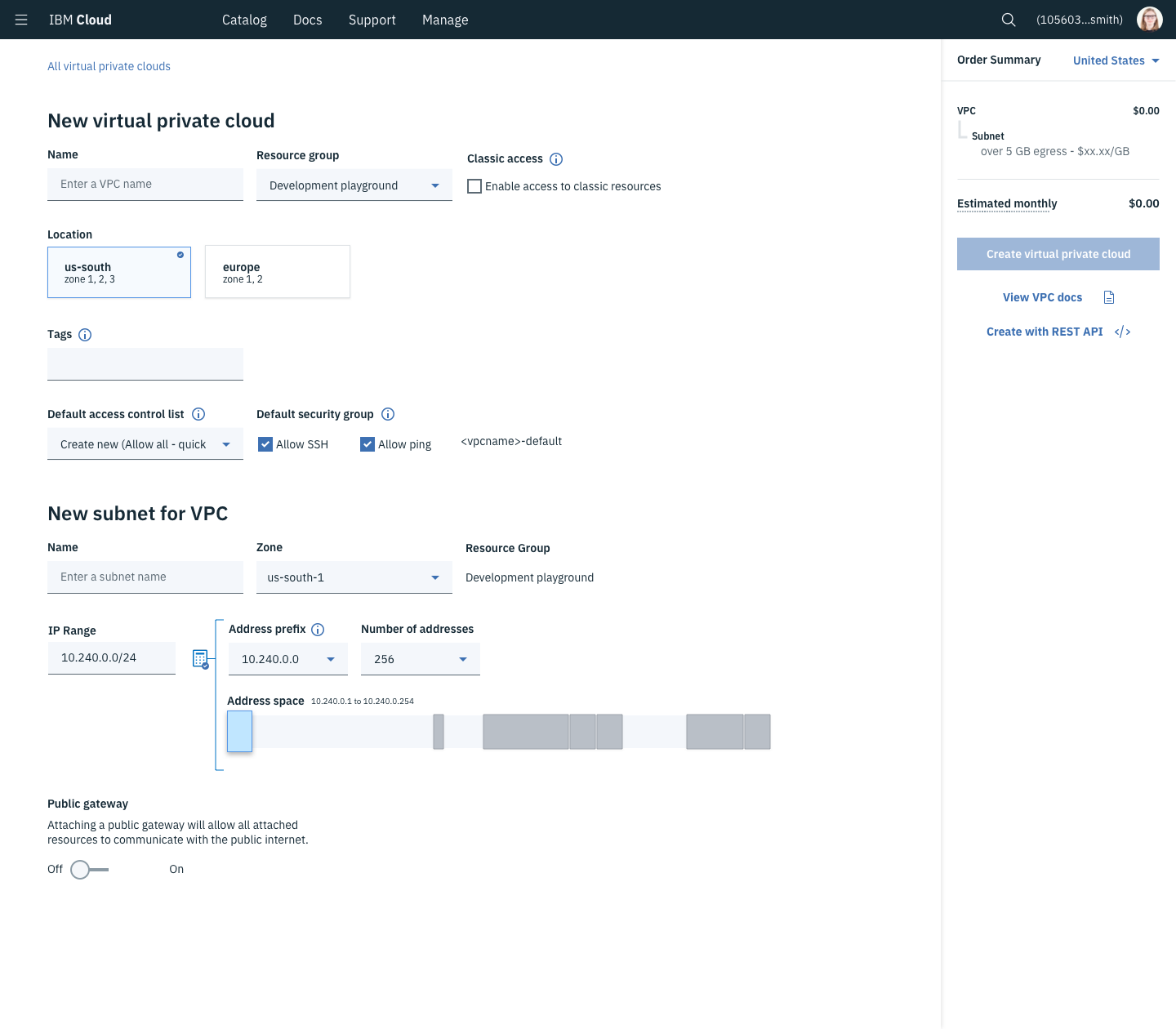

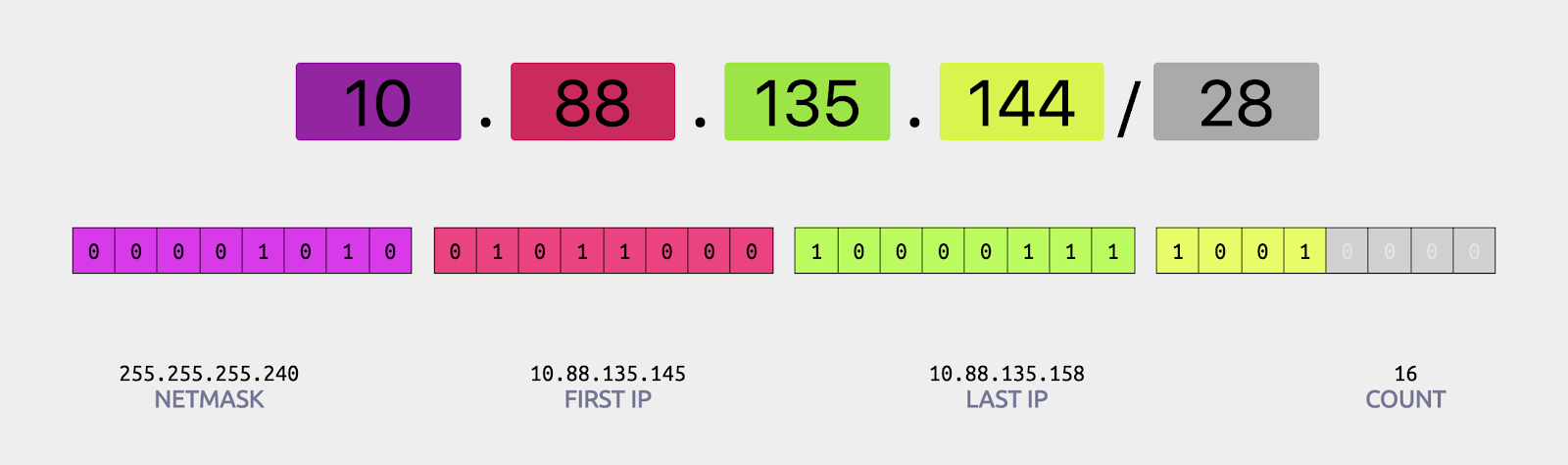

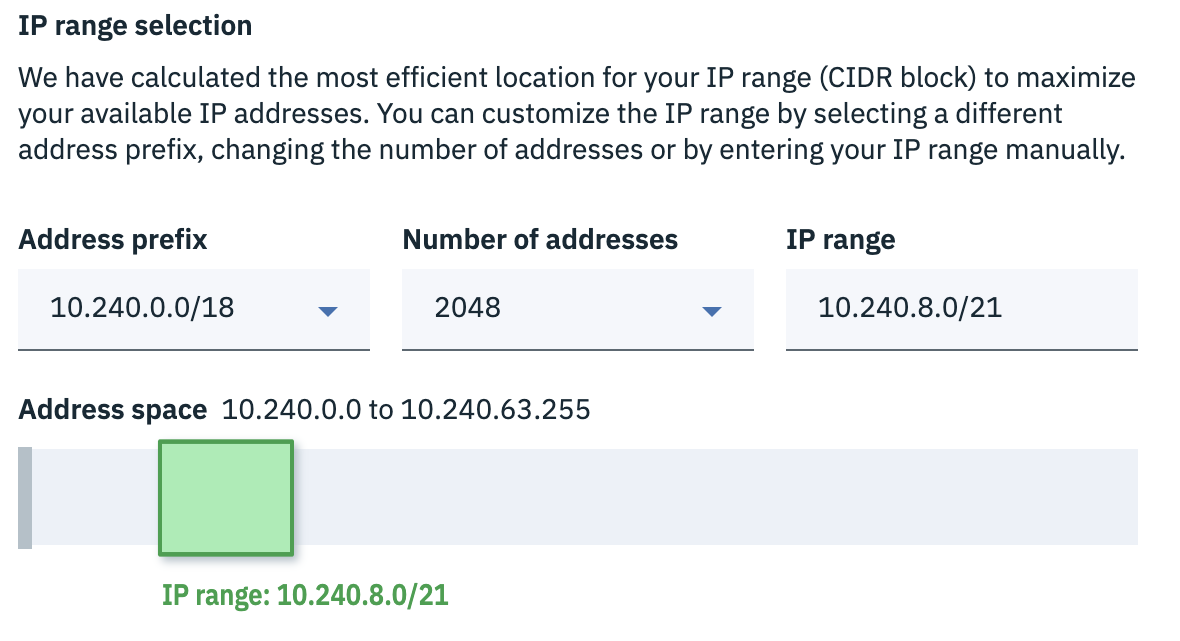

Moments of delight: IP Range Visualization

The core of an application's infrastructure is its network, which is broken into groups of IP addresses called “Subnets”. Subnets are generally notated in an “IP Range” or “CIDR” format, such as 192.168.0.0/24. That converts to the range 192.168.0.0 to 192.168.0.255, a total of 256 IP addresses. Because the first (network) and last (broadcast) addresses are conventionally reserved, 254 of them, 192.168.0.1 to 192.168.0.254, can be assigned to network devices such as computers. The catch is that IP ranges within the same network aren't allowed to overlap. While simple network arrangements are relatively easy to navigate, more complex ones become exhausting and unpleasant to work with.
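The /24 example and the no-overlap rule can be checked with Python's standard `ipaddress` module. This is a sketch for illustration only; cloud providers may reserve additional addresses in each subnet beyond the network and broadcast addresses, so usable counts in a real VPC can be lower.

```python
import ipaddress

net = ipaddress.ip_network("192.168.0.0/24")
print(net.network_address, net.broadcast_address)  # 192.168.0.0 192.168.0.255
print(net.num_addresses)                           # 256

# hosts() skips the network and broadcast addresses.
hosts = list(net.hosts())
print(hosts[0], hosts[-1], len(hosts))             # 192.168.0.1 192.168.0.254 254

# Two ranges conflict if any address belongs to both.
a = ipaddress.ip_network("192.168.0.0/24")
b = ipaddress.ip_network("192.168.0.128/25")  # the upper half of a
c = ipaddress.ip_network("192.168.1.0/24")
print(a.overlaps(b))  # True
print(a.overlaps(c))  # False
```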

Through a brainstorming session with our development team and designers, we identified that, while many network architects have grown to accept this process, we could feasibly offer a simpler path for those who wanted one. We arranged a series of sessions in which our 4 designers diverged and converged, worked with our development teams, and ultimately built an IP range visualization and selector.

In addition to contributing to the designs, I tried to be the team's touchpoint for technical details. My understanding came from reading articles and studying portions of an “AWS Certified Solutions Architect” course. To help our team understand the problem and feel more confident in their solutions, I organized a quick crash course that covered how IP ranges are formed and the math behind them.
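A compact summary of the IPv4 math involved, written here for illustration rather than reproduced from the crash course itself: an IPv4 address has 32 bits, and a CIDR prefix length $p$ fixes the leading $p$ bits, leaving $32 - p$ bits free for addresses inside the block.

```latex
\begin{aligned}
N(p) &= 2^{32 - p} && \text{addresses in a /}p\text{ block} \\
N(24) &= 2^{8} = 256, \qquad N(26) = 2^{6} = 64 \\
\text{mask}(24) &= 255.255.255.0
\end{aligned}
```

A /$p$ block must also start on a multiple of $N(p)$, which is why 192.168.0.64/26 (covering .64 to .127) is a valid block while 192.168.0.32/26 is not.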

Where competitors offer only a text box for entering a valid, non-conflicting IP range, we went a step further. When the user begins creating a network, we prefill a non-conflicting IP range that fills the next logical “block” of the network. Users who don't need anything specific can simply continue. Those who need to customize it can manipulate the IP range with a few clicks and see how it fits into the network. Those who prefer entering a value manually can still do so, with the added bonus of visual feedback rather than an error message.
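The prefill behavior can be approximated by scanning the VPC's address range in aligned blocks of the desired size and returning the first one that doesn't overlap an existing subnet. This is a hypothetical first-fit sketch, not the patented implementation: the function name `next_free_block`, the first-fit strategy, and the example ranges are assumptions made for illustration.

```python
import ipaddress
from typing import Optional

def next_free_block(vpc_range: str, existing: list[str], prefix: int) -> Optional[str]:
    """Return the first aligned block of the given prefix length that does not
    overlap any existing subnet, or None if the VPC range has no room left."""
    parent = ipaddress.ip_network(vpc_range)
    taken = [ipaddress.ip_network(s) for s in existing]
    # subnets() yields aligned, non-overlapping blocks in ascending order.
    for candidate in parent.subnets(new_prefix=prefix):
        if not any(candidate.overlaps(t) for t in taken):
            return str(candidate)
    return None

# Hypothetical VPC address range with two subnets already in use.
used = ["10.240.0.0/24", "10.240.1.0/25"]
print(next_free_block("10.240.0.0/16", used, 24))  # 10.240.2.0/24
print(next_free_block("10.240.0.0/16", used, 25))  # 10.240.1.128/25
```

First-fit keeps the prefilled suggestion predictable (it always sits right after the last used block), which suits a default the user can accept without thinking.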

Our solution is still in its infancy, with room to grow, but it has already been filed as a patent shared between our design and development teams.

Biggest Challenges

The most significant challenges of VPC start with the length of the project. Because it remained in a state of theoretical functionality and prototypes for so long, the whole team was exposed to redesign and rework that was rarely based on a real need. The project became so built up in our heads, and the pressure from the industry was crushing: every day we didn't release, the expectation that the final product had to be even better increased. In reality, the delays were caused by realizing we couldn't achieve a certain goal that quickly.

Because the project lasted multiple years, yet was always expected to be “launching next quarter”, the project team's morale fluctuated heavily. As a result, team members left, leadership reorganized, new people were brought in, and so on. Every time this happened, the next person re-sorted the roadmap to more realistically “launch VPC next quarter”. It was difficult to track which services or functionality, in which technology generation, were going to launch on which date.

The last challenge was simply the technology. From previous projects, I had experience with many of the concepts presented to me in VPC. However, over the course of the project I was introduced to more complex use cases, tighter integration between services, and the differences between the Genesis and Wrigley technologies. I tackled this by working frequently with our development teams and reading competitors' documentation on the subjects as well as our own. I also supplemented this by watching relevant sections of an “AWS Certified Solutions Architect” course.

Results

IBM Cloud Virtual Private Cloud was released in June 2019. It was long awaited by both our company and our clients. The team is currently monitoring our NPS scores and feedback, and arranging conversations with customers to see where we can continue improving.
